Precision and recall

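- Precision: Out of all the positive predictions, how many were correct? $\text{precision} = \frac{TP}{TP + FP}$

- Recall: Out of all the actual positives, how many were predicted positive? $\text{recall} = \frac{TP}{TP + FN}$

- Specificity: Out of all the actual negatives, how many were predicted negative? $\text{specificity} = \frac{TN}{TN + FP}$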

Confusion matrix

| | Predicted Positive | Predicted Negative |
|---|---|---|
| Actual Positive | TP | FN |
| Actual Negative | FP | TN |

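- Some references swap the axes, but either way a good predictor concentrates its counts on the main diagonal (TP and TN)

- There's also the F1 score, or harmonic mean of precision and recall: $F_1 = 2 \cdot \frac{\text{precision} \cdot \text{recall}}{\text{precision} + \text{recall}}$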
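As a minimal sketch of the definitions above, the following computes all four scores from the cells of a confusion matrix. The function name `classification_metrics` and the counts are hypothetical, chosen only for illustration, and the sketch does not guard against empty denominators.

```python
def classification_metrics(tp, fp, fn, tn):
    """Return precision, recall, specificity and F1 from confusion-matrix counts."""
    precision = tp / (tp + fp)      # correct among positive predictions
    recall = tp / (tp + fn)         # found among actual positives
    specificity = tn / (tn + fp)    # found among actual negatives
    f1 = 2 * precision * recall / (precision + recall)  # harmonic mean
    return {
        "precision": precision,
        "recall": recall,
        "specificity": specificity,
        "f1": f1,
    }


# Hypothetical counts, not taken from any real dataset
metrics = classification_metrics(tp=40, fp=10, fn=20, tn=30)
print(metrics)
```

With these counts precision (0.8) is higher than recall (about 0.67), and F1 lands between the two, closer to the lower value.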

ROC Curves

- The receiver operating characteristic curve is a plot of the true positive rate (recall or sensitivity) vs. false positive rate (1 - specificity) as the detection threshold changes

- The diagonal is the same as random guessing

- A perfect classifier would hug the top left corner

Fun fact: the name comes from WWII radar operators, where true positives were airplanes and false positives were noise

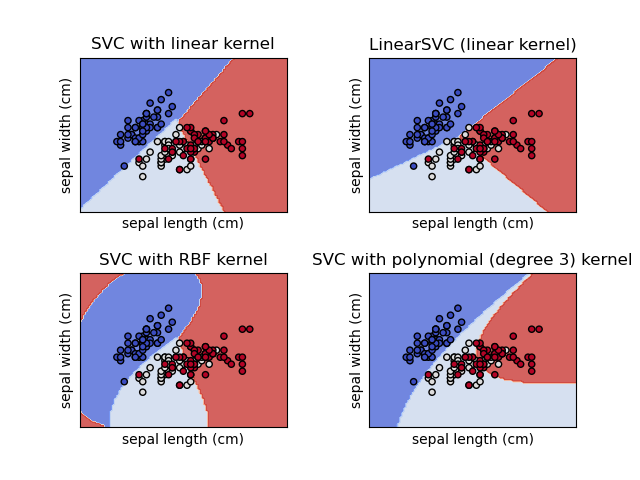
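To make the threshold sweep concrete, here is a small sketch that builds the ROC points by hand. The helper name `roc_points`, the labels, and the scores are all made up for illustration. A sample is predicted positive when its score is at or above the current threshold.

```python
def roc_points(y_true, scores):
    """Return (FPR, TPR) pairs obtained by lowering the decision threshold."""
    positives = sum(y_true)
    negatives = len(y_true) - positives
    # Threshold above every score: nothing is predicted positive
    points = [(0.0, 0.0)]
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for y, s in zip(y_true, scores) if s >= threshold and y == 1)
        fp = sum(1 for y, s in zip(y_true, scores) if s >= threshold and y == 0)
        points.append((fp / negatives, tp / positives))
    return points


# Hypothetical labels (1 = positive) and classifier scores
y_true = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]
curve = roc_points(y_true, scores)
for fpr, tpr in curve:
    print(f"FPR={fpr:.2f}  TPR={tpr:.2f}")
```

The curve starts at (0, 0), ends at (1, 1), and rises above the diagonal because most positives receive higher scores than the negatives.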

Classification model: Support Vector Classifier

- Linear model that finds the hyperplane separating the classes with the largest margin; the training points closest to it are the support vectors

- "Kernel trick" allows for nonlinear boundaries

- Check out the SVM Appendix of Hands-on Machine Learning by Aurélien Géron for more info
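One way to see why the kernel trick works: a kernel function evaluated in the original space gives the same number as a dot product in a higher-dimensional feature space, so the model never has to build that space explicitly. The sketch below checks this for a degree-2 polynomial kernel on 2-D inputs. The function names and sample points are illustrative assumptions, not part of any library.

```python
import math


def poly_kernel(x, z):
    """Degree-2 polynomial kernel K(x, z) = (x . z)^2 for 2-D inputs."""
    return (x[0] * z[0] + x[1] * z[1]) ** 2


def feature_map(x):
    """Explicit 3-D feature map whose dot product reproduces poly_kernel."""
    return (x[0] ** 2, math.sqrt(2) * x[0] * x[1], x[1] ** 2)


def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))


# Arbitrary sample points
x, z = (1.0, 2.0), (3.0, -1.0)
kernel_value = poly_kernel(x, z)                      # computed in the original 2-D space
explicit_value = dot(feature_map(x), feature_map(z))  # computed in the 3-D feature space
print(kernel_value, explicit_value)
```

Both values agree up to floating-point rounding, even though the first computation never leaves two dimensions.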

Classification model: Decision Trees

- Family of models including:

  - decision trees

  - random forests

  - gradient boosted decision trees

- Finds thresholds on features that best separate the classes

- Complexity is controllable through the maximum depth parameter
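The threshold search can be sketched for a single feature and a single split (a depth-1 tree, or "stump"). This sketch assumes Gini impurity as the split criterion, which is a common choice but is not stated in the slides. The helper names and data are hypothetical.

```python
def gini(labels):
    """Gini impurity of a list of 0/1 labels."""
    if not labels:
        return 0.0
    p = sum(labels) / len(labels)
    return 2 * p * (1 - p)


def best_threshold(values, labels):
    """Return (threshold, weighted impurity) for the best split `value <= threshold`."""
    best = (None, float("inf"))
    ordered = sorted(set(values))
    for lo, hi in zip(ordered, ordered[1:]):
        threshold = (lo + hi) / 2  # candidate halfway between neighbouring values
        left = [y for v, y in zip(values, labels) if v <= threshold]
        right = [y for v, y in zip(values, labels) if v > threshold]
        impurity = (len(left) * gini(left) + len(right) * gini(right)) / len(values)
        if impurity < best[1]:
            best = (threshold, impurity)
    return best


# Hypothetical feature values and class labels
values = [1.0, 2.0, 3.0, 6.0, 7.0, 8.0]
labels = [0, 0, 0, 1, 1, 1]
threshold, impurity = best_threshold(values, labels)
print(threshold, impurity)
```

A deeper tree repeats this search inside each resulting subset, which is why limiting the depth limits how closely the tree can fit the training data.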

Model evaluation: regression

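For a predicted value $\hat{y}_i$ and true value $y_i$ over $m$ samples:

- Mean squared error: $\text{MSE} = \frac{1}{m} \sum_{i=1}^{m} (y_i - \hat{y}_i)^2$

- Root mean squared error: $\text{RMSE} = \sqrt{\text{MSE}}$

- Mean absolute error: $\text{MAE} = \frac{1}{m} \sum_{i=1}^{m} |y_i - \hat{y}_i|$

- Mean absolute percentage error: $\text{MAPE} = \frac{100\%}{m} \sum_{i=1}^{m} \left| \frac{y_i - \hat{y}_i}{y_i} \right|$

- Coefficient of determination: $R^2 = 1 - \frac{\sum_{i=1}^{m} (y_i - \hat{y}_i)^2}{\sum_{i=1}^{m} (y_i - \bar{y})^2}$

($\bar{y}$ is the mean of the true values)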
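The regression metrics listed above can be sketched in a few lines of plain Python. The function name `regression_metrics` and the sample values are hypothetical. Note that the percentage error is undefined when a true value is zero, which this sketch does not handle.

```python
import math


def regression_metrics(y_true, y_pred):
    """Compute MSE, RMSE, MAE, MAPE (in percent) and R^2 for paired values."""
    m = len(y_true)
    errors = [yt - yp for yt, yp in zip(y_true, y_pred)]
    mse = sum(e ** 2 for e in errors) / m
    mae = sum(abs(e) for e in errors) / m
    mape = 100 * sum(abs(e / yt) for e, yt in zip(errors, y_true)) / m
    y_mean = sum(y_true) / m
    ss_res = sum(e ** 2 for e in errors)                # residual sum of squares
    ss_tot = sum((yt - y_mean) ** 2 for yt in y_true)   # total sum of squares
    r2 = 1 - ss_res / ss_tot
    return {"mse": mse, "rmse": math.sqrt(mse), "mae": mae, "mape": mape, "r2": r2}


# Made-up true values and predictions
y_true = [2.0, 4.0, 5.0, 8.0]
y_pred = [2.5, 3.5, 5.0, 7.0]
scores = regression_metrics(y_true, y_pred)
print(scores)
```

RMSE and MAE are in the same units as the target, MAPE is a percentage, and the coefficient of determination is unitless, which is why they are often reported together.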

Regression: ordinary least squares

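- In 1D, estimate modeled as: $\hat{y} = \theta_0 + \theta_1 x$

- Vector form: $\hat{y} = \boldsymbol{\theta}^\top \mathbf{x}$, with $\boldsymbol{\theta} = [\theta_0, \theta_1]^\top$ and $\mathbf{x} = [1, x]^\top$

- Goal is to minimize the mean squared error over $m$ samples: $\text{MSE}(\boldsymbol{\theta}) = \frac{1}{m} \sum_{i=1}^{m} (y_i - \boldsymbol{\theta}^\top \mathbf{x}_i)^2$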
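For the 1D case, minimizing the mean squared error has a well-known closed form: the slope is the covariance of x and y divided by the variance of x, and the intercept follows from the means. A minimal sketch, with `fit_line`, `theta0`, and `theta1` as assumed names and synthetic data:

```python
def fit_line(xs, ys):
    """Least-squares intercept theta0 and slope theta1 for y ~ theta0 + theta1 * x."""
    m = len(xs)
    x_mean = sum(xs) / m
    y_mean = sum(ys) / m
    covariance = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    variance = sum((x - x_mean) ** 2 for x in xs)
    theta1 = covariance / variance
    theta0 = y_mean - theta1 * x_mean
    return theta0, theta1


# Synthetic points lying exactly on y = 1 + 2x
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]
theta0, theta1 = fit_line(xs, ys)
print(theta0, theta1)
```

Because the data here is noise-free, the fit recovers the intercept and slope that generated it. With noisy data the same formulas return the line with the smallest mean squared error.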

Regression: N-dimensional

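- Most of the time we have more than one feature

- N-D: $\hat{y} = \theta_0 + \theta_1 x_1 + \dots + \theta_N x_N = \boldsymbol{\theta}^\top \mathbf{x}$

- Common to use a design matrix $\mathbf{X}$, where each row is an instance (sample) and each column is a feature (plus a leading column of ones for the intercept $\theta_0$).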

Regression: N-dimensional

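- We can rewrite the estimate in matrix notation, with design matrix $\mathbf{X}$ and parameter vector $\boldsymbol{\theta}$: $\hat{\mathbf{y}} = \mathbf{X} \boldsymbol{\theta}$

- The MSE over $m$ samples can be written as: $\text{MSE}(\boldsymbol{\theta}) = \frac{1}{m} (\mathbf{y} - \mathbf{X}\boldsymbol{\theta})^\top (\mathbf{y} - \mathbf{X}\boldsymbol{\theta})$

- This has a closed form solution, $\hat{\boldsymbol{\theta}} = (\mathbf{X}^\top \mathbf{X})^{-1} \mathbf{X}^\top \mathbf{y}$, but it is computationally expensive when there are many features, since the cost of inverting $\mathbf{X}^\top \mathbf{X}$ grows roughly cubically with the number of features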
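The closed-form (normal equation) solution can be sketched with NumPy on synthetic data. Everything here is an assumption for illustration: the sizes, the random features, and the "true" parameters, with the intercept stored first. The sketch solves the linear system rather than forming the inverse explicitly, and compares against `np.linalg.lstsq`, which solves the same least-squares problem.

```python
import numpy as np

rng = np.random.default_rng(0)
m, n_features = 50, 3                          # samples, features (arbitrary sizes)
features = rng.normal(size=(m, n_features))
true_theta = np.array([4.0, 1.5, -2.0, 0.5])   # intercept first, then one weight per feature

# Design matrix: one row per sample, leading column of ones for the intercept
X = np.column_stack([np.ones(m), features])
y = X @ true_theta                             # noise-free targets so the fit can be checked

# Normal equation: solve (X^T X) theta = X^T y instead of inverting X^T X
theta_normal = np.linalg.solve(X.T @ X, X.T @ y)

# The same least-squares problem via NumPy's built-in solver
theta_lstsq, *_ = np.linalg.lstsq(X, y, rcond=None)

mse = np.mean((X @ theta_normal - y) ** 2)
print(theta_normal, theta_lstsq, mse)
```

Both routes recover the generating parameters here. On large or ill-conditioned problems, iterative methods such as gradient descent are a common alternative to the closed form.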

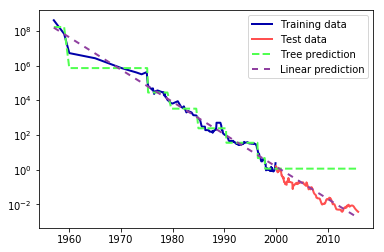

Regression: Decision trees

Figure 2-32. Comparison of predictions made by a linear model and predictions made by a regression tree on the RAM price data. Source: Introduction to Machine Learning with Python
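A regression tree predicts the mean target value of the leaf a sample falls into, so its output is piecewise constant. The toy sketch below fits a single split by minimizing squared error. It uses made-up data rather than the RAM price data from the figure, and `fit_stump` is a hypothetical helper name. It shows that predictions stay flat outside the training range.

```python
def fit_stump(xs, ys):
    """One-split regression tree: pick the threshold minimizing squared error."""
    def sse(values):
        mean = sum(values) / len(values)
        return sum((v - mean) ** 2 for v in values)

    best = None
    ordered = sorted(set(xs))
    for lo, hi in zip(ordered, ordered[1:]):
        threshold = (lo + hi) / 2
        left = [y for x, y in zip(xs, ys) if x <= threshold]
        right = [y for x, y in zip(xs, ys) if x > threshold]
        cost = sse(left) + sse(right)
        if best is None or cost < best[0]:
            best = (cost, threshold, sum(left) / len(left), sum(right) / len(right))
    _, threshold, left_mean, right_mean = best
    # Each leaf predicts the mean of the training targets it contains
    return lambda x: left_mean if x <= threshold else right_mean


# Made-up data following a perfectly linear trend, y = 2x
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]
predict = fit_stump(xs, ys)
print(predict(4.0), predict(100.0))
```

A linear model fitted to the same points would continue the trend and predict 200 at x = 100, while the stump returns the same leaf mean it gives at x = 4.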

Comparison of model families

Linear models

- Advantages:

  - Very fast, particularly inference

  - Scalable to large number of features

  - Can model nonlinearity with kernel trick

  - Easy to regularize

- Disadvantages:

  - Difficult to interpret

  - Sensitive to parameters and preprocessing

  - Data needs to be on same scale

  - Slow to train on large datasets (kernelized models in particular)

Decision Trees

- Advantages:

  - Highly explainable

  - Fast to train

  - Few parameters to tune

  - Little preprocessing needed

  - Provides feature importance

- Disadvantages:

  - Prone to overfitting

  - High variance

  - Poor extrapolation

Coming up next

- Exploring and understanding your data

- Splitting your data

- Repeatability considerations

- Stratified sampling

- Assignment 1: Exploring Calgary traffic data